libvirt: Supporting multiple vGPU types for a single pGPU

https://blueprints.launchpad.net/nova/+spec/vgpu-multiple-types

Virtual GPU support in Nova was implemented in Queens, but with only one supported vGPU type per compute node. Now that physical GPUs are modeled as nested Resource Providers, this spec only targets how to expose an operator choice for telling which vGPU type should be set per physical GPU.

Note

As Xen provides a specific feature where physical GPUs supporting the same vGPU type are within a single pGPU group, that virt driver doesn’t need to know exactly which pGPUs need to support a specific type. Hence this spec only targets the libvirt driver.

Problem description

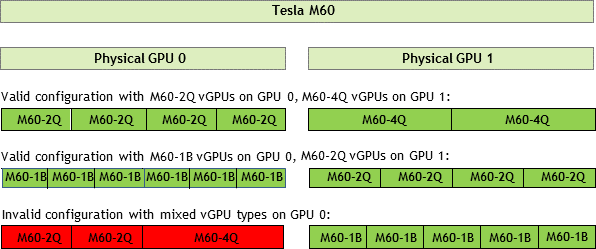

As of now, although multiple vGPU types can be offered to users, a single physical GPU can only accept one type for all of its virtual devices.

For example, NVIDIA GRID physical cards can accept a list of different vGPU types, but the driver can only support one type per physical GPU.

Consequently, we require a way to instruct the libvirt driver which vGPU types an NVIDIA or Intel physical GPU is configured to accept.

Use Cases

An operator needs a way to inform the libvirt driver which vGPU types an NVIDIA or Intel physical GPU is configured to accept.

Proposed change

We already have [devices]/enabled_vgpu_types, which defines which types the Nova compute node can use:

    [devices]
    enabled_vgpu_types = [str_vgpu_type_1, str_vgpu_type_2, ...]

We now propose that libvirt accept configuration sections that are related to [devices]/enabled_vgpu_types and that specify exactly which pGPUs are related to each enabled vGPU type. Each such section will have a device_addresses option defined like this:

    from oslo_config import cfg

    # The list name is illustrative; the option is registered once per
    # enabled vGPU type, in a [vgpu_<type>] section.
    vgpu_type_opts = [
        cfg.ListOpt('device_addresses',
                    default=[],
                    help="""
    List of physical PCI addresses to associate with a specific GPU type.

    The particular physical GPU device address needs to be mapped to the vendor
    vGPU type which that physical GPU is configured to accept. In order to
    provide this mapping, there will be a CONF section with a name corresponding
    to the following template: "vgpu_%(vgpu_type_name)s".

    The vGPU type to associate with the PCI devices has to be the section name
    prefixed by ``vgpu_``. For example, for 'nvidia-11', you would declare
    ``[vgpu_nvidia-11]/device_addresses``.

    Each vGPU type also has to be declared in ``[devices]/enabled_vgpu_types``.

    Related options:

    * ``[devices]/enabled_vgpu_types``
    """),
    ]

For example, it would be set in nova.conf:

    [devices]
    enabled_vgpu_types = nvidia-35,nvidia-36

    [vgpu_nvidia-35]
    device_addresses = 0000:84:00.0,0000:85:00.0

    [vgpu_nvidia-36]
    device_addresses = 0000:86:00.0

Note

This proposal is very similar to the existing dynamic options we have with NUMA-aware vSwitches.

In that case, the nvidia-35 vGPU type would be supported by the physical GPUs at PCI addresses 0000:84:00.0 and 0000:85:00.0, while the nvidia-36 vGPU type would only be supported by 0000:86:00.0.
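As a rough illustration of that mapping, the sketch below parses the example configuration into a lookup from PCI address to vGPU type. It uses the standard-library `configparser` rather than oslo.config, and the function name `load_pgpu_type_map` is hypothetical. It is not the driver's actual code path, only a way to see the resulting association.

```python
import configparser

# The nova.conf example from this spec, inlined for the sketch.
NOVA_CONF = """
[devices]
enabled_vgpu_types = nvidia-35,nvidia-36

[vgpu_nvidia-35]
device_addresses = 0000:84:00.0,0000:85:00.0

[vgpu_nvidia-36]
device_addresses = 0000:86:00.0
"""


def load_pgpu_type_map(conf_text):
    """Return {pci_address: vgpu_type} built from the [vgpu_<type>] sections.

    Hypothetical helper: Nova itself reads these values through oslo.config.
    """
    parser = configparser.ConfigParser()
    parser.read_string(conf_text)
    raw_types = parser.get("devices", "enabled_vgpu_types")
    enabled = [t.strip() for t in raw_types.split(",") if t.strip()]

    mapping = {}
    for vgpu_type in enabled:
        section = "vgpu_" + vgpu_type
        if not parser.has_section(section):
            # No per-type section: nothing to associate for this type.
            continue
        raw_addresses = parser.get(section, "device_addresses", fallback="")
        for address in raw_addresses.split(","):
            address = address.strip()
            if address:
                mapping[address] = vgpu_type
    return mapping


pgpu_types = load_pgpu_type_map(NOVA_CONF)
```

Only types listed in `enabled_vgpu_types` are considered, which mirrors the requirement that each vGPU type also be declared there.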

If an operator mistakenly provides two types for the same pGPU, an InvalidLibvirtGPUConfig exception will be raised. If the operator forgets to provide a type for a specific pGPU, then the first type given in enabled_vgpu_types will be supported, as in the existing situation.

If the operator mistypes the PCI IDs, then an exception will be raised when creating the inventory.
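The three rules above could be sketched as follows. This is a minimal illustration under stated assumptions, not the driver implementation: `resolve_pgpu_types` and its arguments are hypothetical, `InvalidLibvirtGPUConfig` is defined locally as a stand-in, and `ValueError` is used for unknown PCI addresses only because the spec does not name the exception for that case.

```python
class InvalidLibvirtGPUConfig(Exception):
    """Local stand-in for the exception named in this spec."""


def resolve_pgpu_types(enabled_types, addresses_by_type, host_pgpus):
    """Return {pci_address: vgpu_type} for every pGPU found on the host.

    enabled_types: ordered list from [devices]/enabled_vgpu_types
    addresses_by_type: {vgpu_type: [pci_address, ...]} from the
        [vgpu_<type>] sections
    host_pgpus: PCI addresses of the pGPUs actually present
    """
    if not enabled_types:
        return {}

    assigned = {}
    for vgpu_type in enabled_types:
        for address in addresses_by_type.get(vgpu_type, []):
            # A pGPU accepts a single type, so a second claim is an error.
            if assigned.get(address, vgpu_type) != vgpu_type:
                raise InvalidLibvirtGPUConfig(
                    "%s is configured for both %s and %s"
                    % (address, assigned[address], vgpu_type))
            assigned[address] = vgpu_type

    # Assumption: the spec only says "an exception" for mistyped addresses.
    unknown = sorted(set(assigned) - set(host_pgpus))
    if unknown:
        raise ValueError("no such pGPU on this host: %s" % ", ".join(unknown))

    # pGPUs without an explicit type fall back to the first enabled type.
    default_type = enabled_types[0]
    return {addr: assigned.get(addr, default_type) for addr in host_pgpus}
```

Doing the duplicate check before the fallback keeps the default from masking a conflicting configuration.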

As a single compute node could now support multiple vGPU types, asking operators to provide host aggregates for grouping computes that have the same vGPU type becomes irrelevant. Instead, we need to ask operators to amend their flavors for specific GPU capabilities if they care about such things; otherwise Placement will just randomly pick one of the available vGPU types.

For this, we propose to standardize GPU capabilities that are unfortunately very vendor-specific (e.g. support for a given CUDA library version) by having a nova.virt.vgpu_capabilities module that would translate a vendor-specific vGPU type into a set of os-traits traits.

If operators want vendor-specific traits, it’s their responsibility to provide custom traits on the resource providers or ask the community to find a standard trait that would fit their needs.
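The spec does not define the contents of nova.virt.vgpu_capabilities, so the following is only a sketch of the shape such a translation could take. The table, the function name `traits_for_vgpu_type`, and the trait names are all placeholders invented for illustration. A real module would map to standard os-traits names rather than the `CUSTOM_` strings used here.

```python
# Hypothetical lookup table: neither the type-to-trait pairs nor the trait
# names come from the spec or from os-traits. They only show the data shape.
VGPU_TYPE_TRAITS = {
    "nvidia-35": frozenset({"CUSTOM_EXAMPLE_GPU_CAPABILITY_A"}),
    "nvidia-36": frozenset({
        "CUSTOM_EXAMPLE_GPU_CAPABILITY_A",
        "CUSTOM_EXAMPLE_GPU_CAPABILITY_B",
    }),
}


def traits_for_vgpu_type(vgpu_type):
    """Return the set of traits to report for a vendor-specific vGPU type.

    Unknown types yield an empty set, so the resource provider simply
    carries no capability traits for them.
    """
    return VGPU_TYPE_TRAITS.get(vgpu_type, frozenset())
```

Returning an empty set for unknown types, rather than raising, would let a new vendor type be enabled before its capabilities have been standardized.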

Alternatives

We could ask operators to provide those details in a Provider Configuration File, by adding some libvirt-specific information telling which GPU type to use for a specific Resource Provider. That said, this would require us to amend the YAML schema to allow an extra libvirt-specific parameter, which would defeat the purpose of keeping the Provider Configuration File as generic as possible. It’s also worth saying that a GPU type is not a trait, as it defines a quantitative amount of virtual resources to allocate for a matching physical GPU.

Data model impact

None.

REST API impact

None.

Security impact

None.

Notifications impact

None.

Other end user impact

None.

Performance Impact

None.

Other deployer impact

Operators need to look at sysfs (for libvirt) to find the existing pGPUs and which vGPU types each of them supports.
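As a hedged illustration of that lookup, the sketch below walks the mediated-device tree that the Linux kernel exposes under `/sys/class/mdev_bus`, where each parent device has an `mdev_supported_types` directory. The helper name `list_supported_vgpu_types` is hypothetical, and the path is a parameter so the function can be pointed at a test directory.

```python
import os


def list_supported_vgpu_types(mdev_bus_root="/sys/class/mdev_bus"):
    """Return {pci_address: [vgpu_type, ...]} for each mdev-capable device.

    Hypothetical helper. It returns an empty dict when the host exposes
    no mediated-device bus at all.
    """
    supported = {}
    if not os.path.isdir(mdev_bus_root):
        return supported
    for address in sorted(os.listdir(mdev_bus_root)):
        types_dir = os.path.join(
            mdev_bus_root, address, "mdev_supported_types")
        # Each subdirectory name is a vendor type such as "nvidia-35".
        if os.path.isdir(types_dir):
            supported[address] = sorted(os.listdir(types_dir))
    return supported


if __name__ == "__main__":
    for address, types in list_supported_vgpu_types().items():
        print(address, ", ".join(types))
```

The addresses printed are the values an operator would place in `device_addresses`, and the type names are the candidates for `enabled_vgpu_types`.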

Developer impact

None.

Upgrade impact

None.

Implementation

Assignee(s)

- Primary assignee: bauzas
- Other contributors: None

Feature Liaison

None

Work Items

- Create the config option.
- Modify the libvirt virt driver code to make use of that option for creating the nested Resource Provider inventories.

Dependencies

None.

Testing

Classic unit tests and functional tests.

Documentation Impact

A release note will be added with a ‘feature’ section, and the Virtual GPU documentation will be modified to explain the new feature.

References

None.

History

| Release Name | Description |
|---|---|
| Rocky | Approved |
| Stein | Reproposed |
| Ussuri | Reproposed |